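In the digital age, auto captions have become an indispensable part of video content. They improve viewers' comprehension, and they are also essential for video accessibility and international reach.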

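But the core question remains: "How accurate are auto captions?" Caption accuracy directly affects how credible a message is and how well it spreads. This article examines how auto captions actually perform by looking at the latest speech recognition technology, comparative data across platforms, and user experience. We also share Easysub's expertise in improving caption quality.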

How Does Auto-Captioning Technology Work?

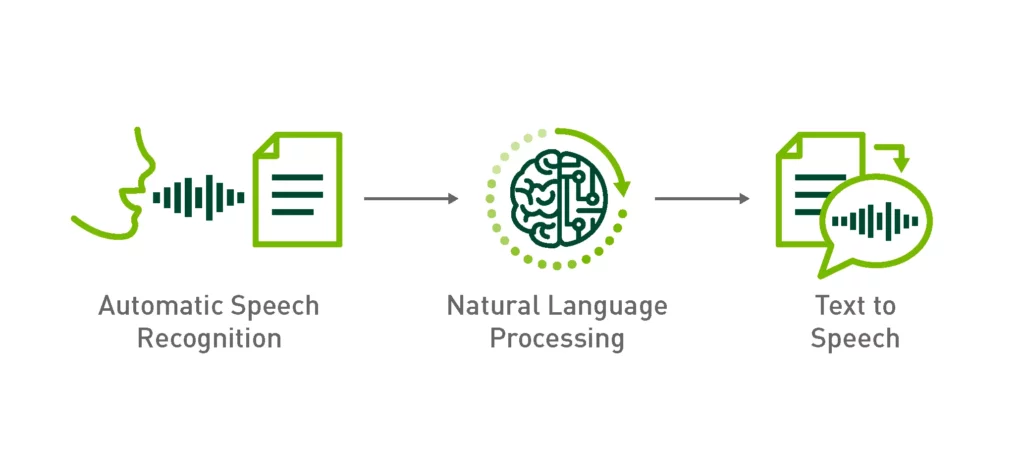

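To understand "how accurate are auto captions?", you first need to know how auto captions are generated. At their core, auto captions rely on Automatic Speech Recognition (ASR), a technology that uses artificial intelligence and natural language processing models to convert spoken content into text.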

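1. Basic process

- Audio input: The system receives the audio signal from a video or live stream.

- Speech recognition (ASR): Acoustic and language models segment the speech and recognize it as words or characters.

- Language understanding: Some advanced systems use contextual semantics to reduce errors caused by homophones or accents.

- Caption synchronization: The generated text is automatically aligned with the timeline to form readable captions.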
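
The synchronization step can be illustrated with a short sketch. It assumes, purely for illustration, that a recognizer returns text segments with start and end times in seconds; the `Segment` type and the `to_srt` helper are hypothetical names, not part of any particular ASR product. The output follows the widely used SRT cue layout (index, time range, text).

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """Hypothetical ASR output: recognized text with start/end times in seconds."""
    start: float
    end: float
    text: str


def format_timestamp(seconds: float) -> str:
    """Format a time in seconds as an SRT timestamp (HH:MM:SS,mmm)."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def to_srt(segments: list[Segment]) -> str:
    """Render segments as numbered SRT cues separated by blank lines."""
    cues = []
    for index, seg in enumerate(segments, start=1):
        cues.append(
            f"{index}\n"
            f"{format_timestamp(seg.start)} --> {format_timestamp(seg.end)}\n"
            f"{seg.text}"
        )
    return "\n\n".join(cues) + "\n"


if __name__ == "__main__":
    demo = [Segment(0.0, 1.5, "Hello"), Segment(1.5, 3.2, "and welcome back.")]
    print(to_srt(demo))
```

Real systems also have to decide where to break lines and how long each cue stays on screen; this sketch only covers the timestamp formatting.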

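2. Main technical approaches

- Traditional ASR methods: These rely on statistical models and acoustic features. They suit standard speech but have limited accuracy in complex environments.

- ASR driven by deep learning and large language models (LLMs): Using neural networks and contextual inference, these models handle accents, multilingual speech, and natural conversation better, and they represent the mainstream direction of auto-captioning technology today.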

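3. Technical limitations

- Background noise, multi-speaker conversation, dialects, and very fast speech all reduce recognition accuracy.

- Current technology still struggles to reach near-100% accuracy in every scenario.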

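As a brand focused on caption generation and optimization, Easysub combines deep learning with post-processing in real-world use to reduce errors to some extent and give users higher-quality captions.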

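Measuring the Accuracy of Auto Captions

To discuss "how accurate are auto captions?", we need a rigorous set of measurement standards. Caption accuracy is not just a matter of "how close it looks"; it requires clearly defined evaluation methods and metrics.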

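1. WER (Word Error Rate)

This is the most commonly used metric. It is calculated as follows:

WER = (substitutions + deletions + insertions) / total number of words in the reference

- Substitution: A word is recognized as a different word.

- Deletion: A word that should have been recognized is left out.

- Insertion: An extra word that was never spoken is added.

For example:

- Original sentence: "I love auto captions."

- Recognition result: "I like auto captions."

Here, replacing "love" with "like" is a substitution error. With one error in a four-word sentence, the WER is 1/4, or 25%.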
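
The WER formula can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: it assumes words are separated by whitespace, lowercases both sides, and ignores punctuation handling. The function names `edit_distance` and `wer` are chosen here for the example. The combined count of substitutions, deletions, and insertions is taken from the minimum-cost alignment (Levenshtein distance) between the two word sequences.

```python
def edit_distance(ref: list[str], hyp: list[str]) -> int:
    """Minimum number of substitutions, deletions and insertions turning ref into hyp."""
    previous = list(range(len(hyp) + 1))  # distances for an empty reference prefix
    for i, ref_token in enumerate(ref, start=1):
        current = [i]  # deleting the first i reference tokens
        for j, hyp_token in enumerate(hyp, start=1):
            substitution = previous[j - 1] + (ref_token != hyp_token)
            deletion = previous[j] + 1
            insertion = current[j - 1] + 1
            current.append(min(substitution, deletion, insertion))
        previous = current
    return previous[-1]


def wer(reference: str, hypothesis: str) -> float:
    """Word error rate: word-level edit distance divided by reference length."""
    ref_words = reference.lower().split()
    hyp_words = hypothesis.lower().split()
    if not ref_words:
        raise ValueError("reference must contain at least one word")
    return edit_distance(ref_words, hyp_words) / len(ref_words)


if __name__ == "__main__":
    # One substituted word out of four reference words.
    print(wer("I love auto captions", "I like auto captions"))  # 0.25
```

Because insertions count as errors, WER can exceed 100% when the recognizer outputs many extra words.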

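2. SER (Sentence Error Rate)

SER is measured at the sentence level: any error in a caption sentence counts the whole sentence as wrong. This stricter standard is typically used in professional settings such as legal or medical captioning.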
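
As a rough sketch of sentence-level scoring, the snippet below counts a sentence as wrong if it differs from its reference in any word. It assumes the reference and recognized sentences are already paired one to one, and it compares lowercased, whitespace-split words; both choices are simplifications made for this example, and the `ser` name is illustrative.

```python
def normalize(sentence: str) -> list[str]:
    """Lowercase and split on whitespace so casing and spacing are not counted as errors."""
    return sentence.lower().split()


def ser(references: list[str], hypotheses: list[str]) -> float:
    """Sentence error rate: share of sentences containing at least one error."""
    if len(references) != len(hypotheses):
        raise ValueError("references and hypotheses must be paired one to one")
    if not references:
        raise ValueError("at least one sentence is required")
    wrong = sum(
        normalize(ref) != normalize(hyp)
        for ref, hyp in zip(references, hypotheses)
    )
    return wrong / len(references)


if __name__ == "__main__":
    refs = ["I love auto captions", "They save time"]
    hyps = ["I like auto captions", "They save time"]
    print(ser(refs, hyps))  # 0.5: one of the two sentences contains an error
```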

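3. CER (Character Error Rate)

CER is particularly suited to evaluating languages such as Chinese and Japanese, which are written without spaces between words. It is calculated in the same way as WER, but uses the character as the basic unit.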
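
A character-level version can be sketched the same way, with characters instead of words as the unit. The example below is an assumption-laden illustration: it strips whitespace, leaves punctuation untouched, and uses the illustrative names `char_edit_distance` and `cer`.

```python
def char_edit_distance(ref: str, hyp: str) -> int:
    """Levenshtein distance between two strings, counted in characters."""
    previous = list(range(len(hyp) + 1))
    for i, ref_char in enumerate(ref, start=1):
        current = [i]
        for j, hyp_char in enumerate(hyp, start=1):
            current.append(min(
                previous[j - 1] + (ref_char != hyp_char),  # substitution or match
                previous[j] + 1,                           # deletion
                current[j - 1] + 1,                        # insertion
            ))
        previous = current
    return previous[-1]


def cer(reference: str, hypothesis: str) -> float:
    """Character error rate: character-level edit distance over reference length."""
    ref = "".join(reference.split())  # drop whitespace so spacing is not scored
    hyp = "".join(hypothesis.split())
    if not ref:
        raise ValueError("reference must contain at least one character")
    return char_edit_distance(ref, hyp) / len(ref)


if __name__ == "__main__":
    # Six reference characters; the hypothesis needs one substitution and one insertion.
    print(round(cer("我愛自動字幕", "我喜歡自動字幕"), 3))  # 0.333
```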

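4. Accuracy vs. comprehensibility

- Accuracy: How closely the recognition result matches the original speech, word for word.

- Comprehensibility: Whether viewers can still understand the captions despite a small number of errors.

For example:

- Recognition result: "I lovve auto captions." (spelling error)

Although WER registers an error, viewers can still understand the meaning, so comprehensibility remains high in this case.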

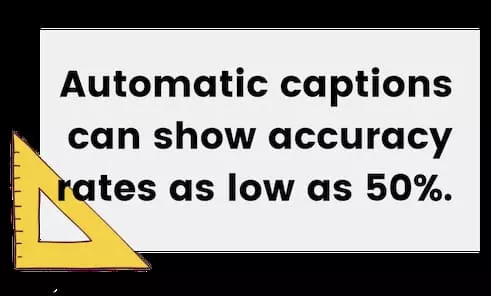

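In the industry, an accuracy of 95% (a WER of about 5%) is considered relatively high. For legal, educational, and professional media scenarios, however, accuracy close to 99% is usually required.

By comparison, common platforms such as YouTube's automatic captions reach accuracy between 60% and 90%, depending on audio quality and speaking conditions. Professional tools such as Easysub combine AI optimization with post-editing after automatic recognition, which significantly reduces the error rate.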

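Factors That Affect Auto-Caption Accuracy

The answer to "how accurate are auto captions?" depends on more than the technology itself; a range of external factors also plays a part. Even the most advanced AI speech recognition models perform very differently from one environment to another. The main factors are as follows: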

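Factor 1. Audio quality

- Background noise: Noisy environments (streets, cafés, live events) interfere with recognition.

- Recording equipment: High-quality microphones capture clearer speech, which improves recognition rates.

- Audio compression: Low bitrates or lossy compression degrade the acoustic features of the sound and reduce recognition quality.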

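Factor 2. Speaker characteristics

- Accent variation: Non-standard pronunciation or regional accents can significantly affect recognition.

- Speaking rate: Speaking too fast tends to cause omissions, while speaking too slowly can break up the context.

- Clarity of pronunciation: Mumbled or unclear articulation makes recognition harder.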

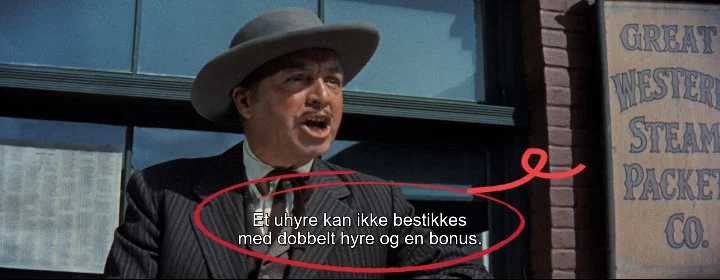

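Factor 3. Language and dialect

- Language diversity: Mainstream languages (such as English and Spanish) usually have more mature trained models.

- Dialects and minority languages: These often lack large-scale corpora, so accuracy is noticeably lower.

- Code-switching: When several languages alternate within one sentence, recognition errors are common.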

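Factor 4. Scenario and content type

- Formal settings: Online courses and lectures, for example, have good audio quality and a moderate speaking rate, so recognition rates are higher.

- Casual conversation: Multi-party discussion, interruptions, and overlapping speech increase the difficulty.

- Technical terminology: Specialized terms common in fields such as medicine, law, and technology may be misrecognized if the model has not been trained on them.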

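Factor 5. Technology and platform differences

Captions built into platforms (such as YouTube, Zoom, and TikTok) usually rely on general-purpose models suited to everyday use, and their accuracy remains inconsistent.

Professional captioning tools (such as Easysub) combine post-processing optimization with human proofreading after recognition, which delivers higher accuracy in noisy environments and complex scenarios.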

Comparing Auto-Caption Accuracy Across Platforms

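| Platform/Tool | Accuracy range | Strengths | Limitations |
|---|---|---|---|
| YouTube | 60% – 90% | Broad coverage, multilingual support, creator-friendly | High error rates with accents, noise, or technical terms |
| Zoom / Google Meet | 70% – 85% | Real-time captions, well suited to education and meetings | Errors in multi-speaker or multilingual scenarios |
| Microsoft Teams | 75% – 88% | Integrated into the workplace, supports live transcription | Weaker performance in non-English languages, struggles with specialized terms |
| TikTok / Instagram | 65% – 80% | Fast automatic generation, ideal for short videos | Prioritizes speed over accuracy, frequent typos and misrecognitions |
| Easysub (professional tool) | 90% – 98% | AI plus post-editing, strong on multilingual and technical content, high accuracy | May require an investment compared with free platforms |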

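How Can Auto-Caption Accuracy Be Improved?

Although the accuracy of auto captions has improved markedly in recent years, achieving higher-quality captions in practice still requires optimization on several fronts:

- Improve audio quality: Using a high-quality microphone and minimizing background noise is the foundation of better recognition accuracy.

- Optimize speaking style: Keep a moderate speaking rate and clear pronunciation, and avoid interruptions or several people talking over one another.

- Choose the right tool: Free platforms cover general needs, but professional captioning tools (such as Easysub) are recommended for educational, business, or professional content.

- Hybrid human-AI proofreading: After the captions are generated automatically, review them manually to bring the final captions as close to 100% accuracy as possible.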

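Future Trends in Auto Captions

Auto captions are evolving rapidly toward being more accurate, smarter, and more personalized. As deep learning and large language models (LLMs) advance, systems will recognize speech more reliably across accents, lesser-known languages, and noisy environments. They will also automatically correct homophones and recognize specialized and industry-specific vocabulary based on contextual understanding. At the same time, tools will understand users better: distinguishing speakers, highlighting key points, adapting the display to reading habits, and providing real-time multilingual captions for both live and on-demand content. Deep integration with editing software and live-streaming platforms will also enable a nearly seamless "generate, proofread, publish" workflow.

Along this path, Easysub positions itself as a complete workflow that combines a free trial with professional upgrades: higher recognition accuracy, multilingual translation, export in standard formats, and team collaboration. It continually incorporates the latest AI capabilities to serve the global communication needs of creators, educators, and businesses. In short, the future of auto captions is not only "more accurate" but also "more tailored to you", evolving from an assistive tool into infrastructure for intelligent communication.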

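Start Using EasySub to Enhance Your Videos Today

In an era of content globalization and explosive growth in short-form video, auto captioning has become a key tool for improving the visibility, accessibility, and professionalism of videos.

With an AI caption generation platform like Easysub, content creators and businesses can produce high-quality, multilingual, accurately synchronized video captions in less time, greatly improving the viewing experience and distribution efficiency.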

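Whether you are a beginner or an experienced creator, Easysub can speed up and enhance your content creation. Try Easysub for free now and experience the efficiency and intelligence of AI captions, so every video can cross language barriers and reach a global audience!

In just a few minutes, let AI empower your content!

👉 Click here for a free trial: easyssub.com

Thank you for reading this blog. If you have more questions or customization needs, feel free to contact us!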